Risk Management in the Age of Digital Disruption

Today, as the repercussions of the Fourth Industrial Revolution begin to take hold, many corporations are contending with hidden risks created by obsolete cost structures, outdated business platforms and practices, evolving markets, shifting client perceptions and pricing pressures, all of which demand some form of action. But change is often a difficult choice because it implies a degree of unknown risk.

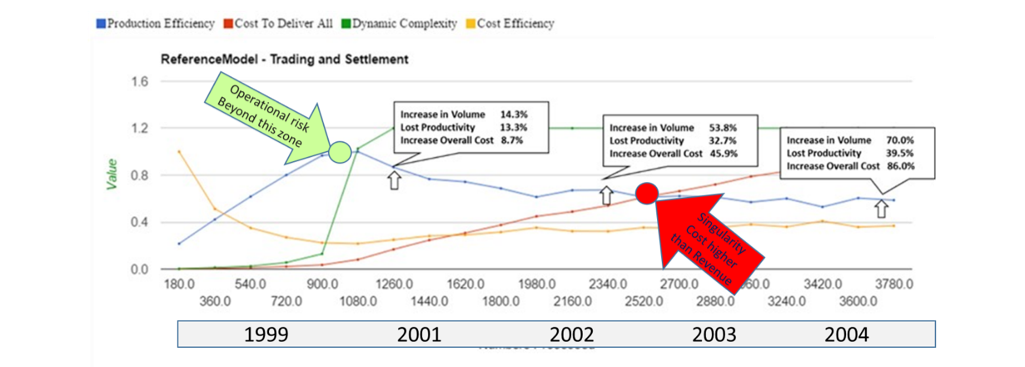

Current risk management practices, which deal mostly with the risk of recurring historical events, cannot help business, government or economic leaders cope with the uncertainty and rate of change driven by the Fourth Industrial Revolution. As new innovations threaten to disrupt their markets, business leaders lack the means to measure the risks and rewards of adopting new technologies and business models. Established companies are faltering as leaner, more agile start-ups bring to market the products and services that customers of the on-demand and sharing economy want, with better quality, faster speeds and/or lower costs than established companies can match.

Due to the continuous adaptations driven by the Third Industrial Revolution, most organizations are now burdened by inefficient and unpredictable systems. Even as the inherent risks of current systems are recognized, many businesses are unable to confidently identify a successful path forward.

PwC’s 2015 Risk in Review survey of 1,229 senior executives and board members reports that 73% of respondents agreed that risks to their companies are increasing. However, the survey also shows that, for the most part, companies are not responding to these growing threats with improved risk management programs. While executives are eager to confront business risks, boost management effectiveness, prevent costly misjudgments, drive efficiency and grow profit margins, only an elite group of companies (12% of those surveyed) have put in place the processes and structures that qualify them as true risk management leaders by PwC’s criteria.

The shortcomings of traditional risk management technologies and probabilistic methodologies are largely to blame. According to Deloitte’s 2015 Global Risk Management Survey, 62% of respondents said that risk information systems and technology infrastructure were extremely or very challenging. Thirty-five percent of respondents considered identifying and managing new and emerging risks to be extremely or very challenging.

To remain competitive, companies must pursue two parallel strategies:

- Building agile and flexible risk management frameworks that can anticipate and prepare for the shifts that bring long-term success.

- Building the resiliency that will enable those frameworks to mitigate risk events and keep the business moving toward its goals.

While many risk management and business management experts agree on the need for better risk management methods and technologies, the probabilistic risk measurement methods and reliance on big data that they propose cannot meet the risk management requirements of the twenty-first century.

Many popular analytic methods are supported by nothing more than big data hype, which promises users that the more data they have, the better the conclusions they will be able to draw. However, if the dynamics of a system are continuously changing, any analysis based on big data will only be valid for the window of time during which the data was captured. Outside this window, the analysis is unlikely to align with reality.
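To make the window-of-validity argument concrete, here is a toy sketch under stated assumptions, not a risk model. A hypothetical system follows one rule for its first 50 observations and a different rule afterward, and a simple least-squares line stands in for "any analysis based on big data." The function names, the two rules and the change point at x = 50 are all illustrative inventions.

```python
# Toy illustration of the "window of validity" problem.
# The system, its change point and the linear model are all hypothetical.

def system(x):
    """Hypothetical system whose dynamics change abruptly at x = 50."""
    return 2 * x if x < 50 else 150 - x

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x

def mean_abs_error(model, xs, ys):
    """Average absolute gap between the model's predictions and observations."""
    slope, intercept = model
    return sum(abs(slope * x + intercept - y) for x, y in zip(xs, ys)) / len(xs)

# Data captured inside the observation window (before the change).
window = list(range(50))
model = fit_line(window, [system(x) for x in window])

# Data from after the window, once the dynamics have shifted.
later = list(range(50, 100))

in_window_error = mean_abs_error(model, window, [system(x) for x in window])
out_of_window_error = mean_abs_error(model, later, [system(x) for x in later])

print(f"error inside window:  {in_window_error}")      # 0.0
print(f"error outside window: {out_of_window_error}")  # 73.5
```

The fitted line matches the captured window perfectly, yet misses post-change observations by 73.5 units on average. Collecting more data from the original window would not narrow that gap, because those data describe dynamics that no longer apply.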

To respond to the rate of change engendered by the Fourth Industrial Revolution, the practice of risk management must mature into a scientific discipline. Our client work and research expose the failures of traditional risk management practices, but many are not aware that there is a better way to discover and treat risk. Ultimately, we will need more collaborators and partners who wish to teach business leaders and risk practitioners how new universal risk management approaches and mathematics-based emulation technologies can be used to identify new and emerging risks and prescriptively control business outcomes. If you are interested in joining our cause, please contact us; we are always looking for new research, technology and service partners.